When we say most AI teams are flying blind, we don’t mean they have no monitoring. Almost everyone has monitoring.

We mean something more specific: they can see their aggregate metrics, but they can’t see the behavioral patterns inside them. And for production AI, the behavioral patterns are where the real information lives.

Here’s what that gap actually costs you — and what it looks like to close it.

Instrumentation vs. observability

These words get used interchangeably, but they’re different things.

Instrumentation is capturing data about your system: traces, logs, latency, cost, error rates. It answers the question “what happened?”

Observability is the ability to understand your system’s internal state from its external outputs — even for conditions you didn’t anticipate. It answers the question “why did it happen, and for whom?”

For traditional software, the gap between the two is manageable. Systems are largely deterministic: the same input produces the same output, so if you know what happened, you can usually work out why.

AI agents aren’t deterministic. The same input can produce different outputs depending on conversation context, sampling randomness, retrieval results, and other factors. Two users can ask the same question and get meaningfully different experiences. Aggregate metrics smooth over all of that variation and hand you a single number.

If that single number is all you watch, you’re flying blind.

Three failure modes that are invisible to aggregate metrics

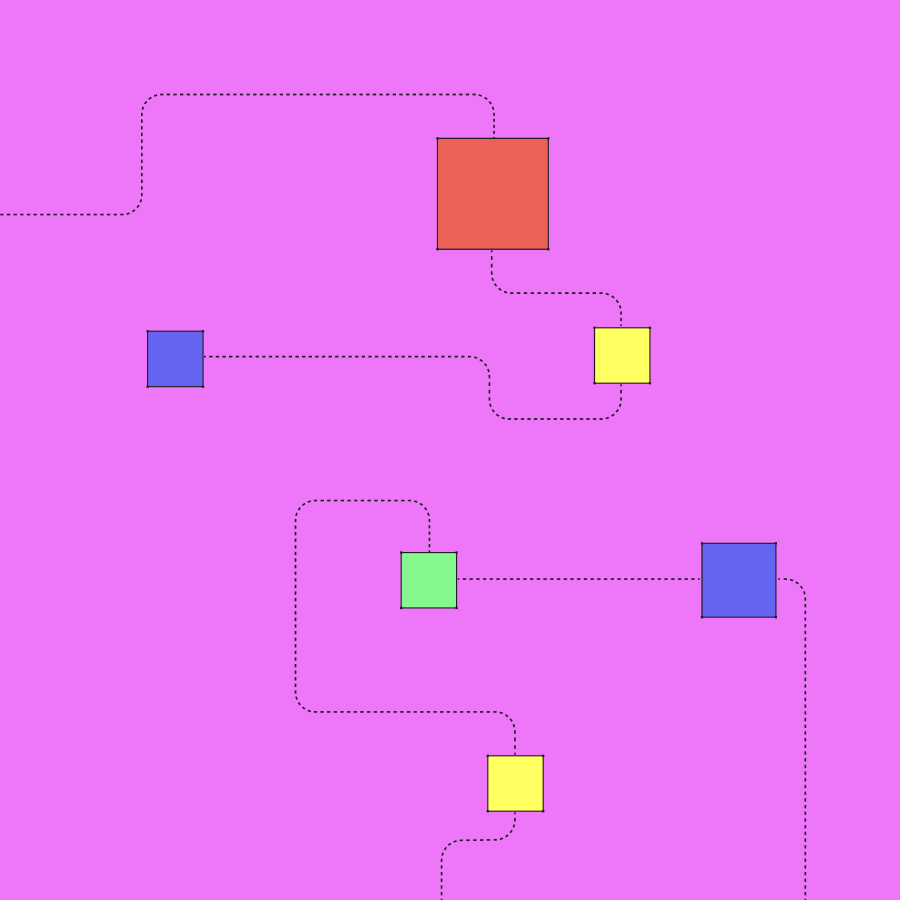

1. Behavioral drift by segment

Your overall quality score is stable at 4.1 out of 5. What you can’t see: the score for first-time users has dropped from 4.3 to 3.6 over the past three weeks, while the score for power users has improved from 3.9 to 4.4. The two trends largely offset each other in the aggregate.

This kind of segmented drift is common in production AI, and it stays invisible unless you’re specifically looking for it. If you didn’t define “first-time users” as a segment to track, you won’t find it.
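To make the arithmetic concrete, here’s a minimal Python sketch using the segment scores from the example above. The traffic shares are an assumption made purely for illustration (first-time users shrinking from 50% to 37.5% of interactions); the example itself doesn’t specify segment sizes.

```python
# Illustrative only: segment scores come from the example above;
# the traffic shares are assumed, not measured.

def aggregate(scores, shares):
    """Traffic-weighted mean quality score across segments."""
    return sum(scores[s] * shares[s] for s in scores)

before_scores = {"first_time": 4.3, "power": 3.9}
after_scores = {"first_time": 3.6, "power": 4.4}

# Assumed traffic mix: first-time users shrink from 50% to 37.5%.
before_shares = {"first_time": 0.5, "power": 0.5}
after_shares = {"first_time": 0.375, "power": 0.625}

agg_before = aggregate(before_scores, before_shares)
agg_after = aggregate(after_scores, after_shares)

# Per-segment change is large even though the aggregate barely moves.
segment_delta = {
    s: round(after_scores[s] - before_scores[s], 2) for s in before_scores
}

print(f"aggregate: {agg_before:.2f} -> {agg_after:.2f}")
print(f"per-segment change: {segment_delta}")
```

Under these assumed shares the aggregate stays at about 4.1 while first-time users lose 0.7 points. Even with a fixed 50/50 mix, the aggregate would only slip from 4.1 to 4.0, a change small enough to pass as noise on a dashboard.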

2. Unknown-unknown behavioral patterns

Your agent handles customer support queries. You’ve categorized them into billing, technical issues, account management, and general questions. Your per-category metrics look fine.

What you haven’t categorized: the 8% of queries in which users describe frustration with a specific onboarding flow. They don’t fit neatly into any of your buckets, so they get spread across categories or labeled “general.” Your agent handles them worse than any other kind of query, but because you didn’t know to look for the pattern, you never find it.

Unknown unknowns are, by definition, not findable through predefined segments. You need analytical methods that discover clusters without requiring you to specify them in advance.

3. Gradual degradation vs. hard failures

Alert systems are designed to catch hard failures: error spikes, latency jumps, sudden quality drops. They’re much worse at catching gradual degradation — quality that declines 0.1 points per week, response coherence that slowly worsens as your user base shifts, tool call success rates that drift down across months.

Gradual degradation is often more damaging than hard failures, because it compounds quietly. By the time it’s large enough to trigger an alert, it has already affected thousands of interactions and may already have hurt user retention.
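One way to see the difference is to compare a threshold alert with a simple trend check on the same data. The sketch below is a hedged illustration, not a recommended alerting design: the weekly scores, the `ALERT_THRESHOLD` value, and the `SLOPE_LIMIT` value are all made up for the example.

```python
# Illustrative only: weekly scores, the alert threshold, and the slope
# limit are made-up values, not recommendations.

def weekly_slope(scores):
    """Least-squares slope of score against week index (points per week)."""
    n = len(scores)
    mean_x = (n - 1) / 2
    mean_y = sum(scores) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(scores))
    var = sum((x - mean_x) ** 2 for x in range(n))
    return cov / var

# Quality declines by 0.1 points per week, as in the example above.
weekly_scores = [4.3, 4.2, 4.1, 4.0, 3.9, 3.8]

ALERT_THRESHOLD = 3.5  # hypothetical hard-failure alert level
SLOPE_LIMIT = -0.05    # hypothetical tolerated decline per week

# A level-based alert only fires once a score crosses the threshold.
threshold_alert = any(s < ALERT_THRESHOLD for s in weekly_scores)

# A trend check looks at the direction of travel instead of the level.
slope = weekly_slope(weekly_scores)
trend_alert = slope < SLOPE_LIMIT

print(f"threshold alert fired: {threshold_alert}")
print(f"slope: {slope:.3f} points/week, trend alert fired: {trend_alert}")
```

After six weeks of steady decline the threshold alert still hasn’t fired, while the slope check flags the trend. A real system would also need to account for noise and seasonality, which this sketch ignores.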

What behavioral signal discovery actually looks like

Behavioral signal discovery is the practice of automatically identifying patterns in production data without specifying in advance what you’re looking for.

In practice, it means:

Your production traces are continuously analyzed across behavioral dimensions — not just latency and cost, but topic distribution, response characteristics, tool call patterns, quality scores, user context. Unsupervised analysis finds clusters of similar behavior. Some of those clusters correspond to known segments. Others are new.

When a new cluster emerges — say, a set of queries that share a topic, a failure pattern, and a user context you hadn’t previously modeled — it surfaces as a signal. You can inspect the exemplar traces, understand what the pattern represents, and decide whether it warrants action.

The key difference from traditional monitoring: you didn’t have to know what to look for. The analysis found the pattern in your data and brought it to you.
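Here’s a toy Python sketch of that idea. It is an assumption-heavy stand-in, not a description of any particular product’s analysis: the traces are invented, similarity is plain token overlap (a real pipeline would more likely use embeddings and an established clustering algorithm), and the helper names `tokens`, `jaccard`, `greedy_clusters`, and `summarize` are hypothetical.

```python
# Illustrative only: the traces, stopword list, similarity threshold, and
# greedy clustering rule are assumptions, not a production method.

STOPWORDS = {"for", "on", "the", "a", "my", "to"}

def tokens(text):
    """Lowercased word set with a few stopwords removed."""
    return {w for w in text.lower().split() if w not in STOPWORDS}

def jaccard(a, b):
    """Token-set overlap in [0, 1]; 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def greedy_clusters(traces, threshold=0.3):
    """Join the first cluster whose seed is similar enough, else start one."""
    clusters = []
    for t in traces:
        tok = tokens(t["query"])
        for c in clusters:
            if jaccard(tok, c["seed"]) >= threshold:
                c["members"].append(t)
                break
        else:
            clusters.append({"seed": tok, "members": [t]})
    return clusters

def summarize(cluster):
    members = cluster["members"]
    return {
        "size": len(members),
        "categories": sorted({m["category"] for m in members}),
        "mean_score": sum(m["score"] for m in members) / len(members),
        "exemplar": members[0]["query"],
    }

# Invented traces: "category" is the predefined label, "score" a quality score.
traces = [
    {"query": "refund for duplicate charge", "category": "billing", "score": 4.5},
    {"query": "duplicate charge refund request", "category": "billing", "score": 4.4},
    {"query": "stuck on onboarding setup wizard", "category": "technical", "score": 2.8},
    {"query": "onboarding setup wizard confusing", "category": "general", "score": 2.6},
    {"query": "onboarding wizard stuck again", "category": "account", "score": 2.9},
    {"query": "reset password link expired", "category": "account", "score": 4.3},
    {"query": "password reset link missing", "category": "account", "score": 4.2},
]

clusters = greedy_clusters(traces)
overall = sum(t["score"] for t in traces) / len(traces)
summaries = [summarize(c) for c in clusters]

# Candidate signal: a cluster that cuts across predefined categories
# and scores below the overall mean.
signals = [
    s for s in summaries
    if len(s["categories"]) > 1 and s["mean_score"] < overall
]

for s in signals:
    print(s)
```

On this toy data the only flagged cluster is the onboarding one: it spans three predefined categories, so no per-category metric would isolate it, and its exemplar query gives you somewhere to start reading traces.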

The question worth asking

Most AI teams, if you ask them “Do you have observability?” will say yes. They have tracing. They have dashboards. They have evals.

The better question is: “Can you explain why your agent performs differently for different users?”

If the answer is “yes, for the segments we’ve defined” — you’re at Stage 2. The patterns you haven’t defined are still invisible.

If the answer is “yes, and we continuously discover new segments we didn’t anticipate” — you’re approaching Stage 4. You’re not flying blind.

Most teams, if they’re honest, are somewhere in the middle. The first step is knowing exactly where.

Find out where your team stands — 2-minute assessment → distributional.com/assessment